(Instruction to the reader: if you are not interested in delving deep into the technical aspects of how normal ranges are calculated or about a test’s specificity and sensitivity, skip the italicized parts)

Anil, a 30-year-old accountant, was a very worried man. His bilirubin was found elevated on a routine blood test done by his employer. Although he had a healthy lifestyle and never felt ill, he became concerned that he had serious liver disease. Anil sought the opinions of his friends and relatives, searched the internet, and went to an indigenous practitioner who diagnosed him with ‘jaundice’. Anil was told that he had liver disease because his bilirubin was above normal, and was prescribed some herbal remedies ‘to bring the levels down’.

Initially, the bilirubin levels seemed to come down with this herbal treatment, and Anil was relieved. But after several rounds of purported treatment and self-directed testing, his bilirubin level remained elevated. Distraught, he eventually consulted a qualified physician who performed a few basic investigations, and diagnosed Anil with Gilbert’s syndrome, a benign (harmless) condition, requiring no treatment.

The doctor explained that although the bilirubin level would fluctuate over time, it would never affect his health, and that Anil’s out-of-range values did not mean he had liver disease. A lower reading could occur randomly as part of this fluctuation and be interpreted as a ‘cure’ by the anxious patient, only to be followed by renewed anxiety when the value went up again.

Moral of the story: Not all lab results need to be ‘within normal range’ for a person in good health, and not all abnormal lab values mean there is disease. A lab value is only a small part of a large puzzle, which, when seen individually, could mean many things, but when pieced together with the patient’s history, physical examination and other pertinent tests, will yield the big picture.

This article describes how a reference range is created for lab tests, and analyzes the fallacies in ordering and interpreting them. It explains how the same test result might have different implications for different patients. Also included is a plain English introduction to essential biostatistics.

How can a lab result be ‘out of range’ in good health?

Lab values are parameters that people have defined to assess the functioning of the human body.

Haemoglobin, for example, has a normal range (also called the reference interval) of 12-15 g/dl for women. An otherwise healthy young woman might have a haemoglobin level of 10 g/dl due to regular menstrual blood loss, but this does not necessarily mean that she is ill. She might have had the same haemoglobin level for several years and, as she has no symptoms, this is not considered a disease requiring treatment.

In contrast, when a woman whose usual haemoglobin was 14 g/dl is brought to casualty with a history of vomiting blood, a haemoglobin value of 10 g/dl suddenly assumes great significance: that of substantial blood loss requiring immediate treatment.

Thus, although the two haemoglobin values were the same, the interpretation differed because the clinical scenarios differed.

Likewise, an elevated bilirubin value does not necessarily indicate liver disease, and must always be interpreted in the clinical context by a qualified doctor.

A popular teaching in medical school says: “treat the patient, not the lab result”.

How exactly is normal range calculated?

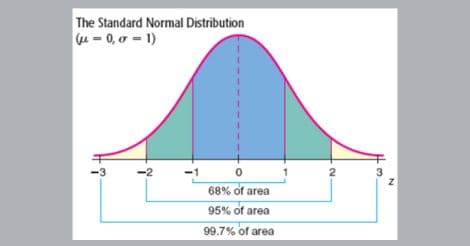

The simplest example of how to calculate a normal range is to measure the height of 100 healthy schoolboys of the same age (10 years). Some boys will be tall, others short, and the rest in between. When these numbers are plotted on a graph, with the height value on the horizontal (x) axis and the number of students on the vertical (y) axis, the plot will look like a bell (the so-called bell curve), also called the Gaussian distribution.

Since the height values of these 100 boys are really spread out, there is a wide gap between the tallest height value (167 cm) and the shortest (112 cm). It is rather impractical to use such a wide range of normal height (112-167 cm) for children of that age.

Figure 1. The Gaussian distribution (Bell-curve)

To make the range more useful in practice, let us now cut off the tails at both ends of the graph, eliminating the right and left extremes of the curve, that is, the tallest 2.5 percent and the shortest 2.5 percent. Thus we have a more easy-to-use ‘normal’ range of height for that class, at 125-155 cm.

Figure 2. How to truncate (slice off) the ends of the bell curve

This process makes the normal range more realistic and practical, effectively reducing the difference between the largest value and the smallest value. What remains is the central 95 percent of values measured in healthy people, usually called the 95 percent reference interval (it is often loosely described as a confidence interval, which strictly speaking is a different statistical concept). It means that 95 percent of healthy people will have a value within the range. It does not mean that the 5 percent who got eliminated at the two ends are necessarily ‘abnormal’ or have any disease.

For more complex and asymmetrical distributions, a logarithmic transformation is often applied to bring the curve closer to a bell shape, but the principle of calculating the normal range remains the same.

In other words, there will be a few perfectly healthy individuals at either end of the spectrum – those that do not fit into the so-called normal range. This principle applies to most lab values like hemoglobin, blood sugar, creatinine, SGOT, SGPT, electrolyte levels, scan results and so on.
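For readers who like to see the arithmetic, the trimming described above can be sketched in a few lines of Python. This is only an illustration: the heights are simulated, the mean (140 cm) and spread (7.5 cm) are assumptions chosen to resemble the 125-155 cm range quoted earlier, and `reference_interval` is a hypothetical helper name, not a standard laboratory routine.

```python
import random
import statistics


def reference_interval(values):
    """Return the 2.5th and 97.5th percentiles of a sample of healthy values."""
    # n=40 splits the data into 40 equal slices of 2.5 percent each, so the
    # first and last cut points are the 2.5th and 97.5th percentiles.
    cuts = statistics.quantiles(values, n=40, method="inclusive")
    return cuts[0], cuts[-1]


# Simulated heights (cm) of 100 healthy boys. The mean and spread are
# assumptions made for illustration, not measured data.
random.seed(1)
heights = [random.gauss(140, 7.5) for _ in range(100)]

low, high = reference_interval(heights)
inside = sum(low <= h <= high for h in heights)

print(f"Full spread: {min(heights):.0f}-{max(heights):.0f} cm")
print(f"Reference interval: {low:.0f}-{high:.0f} cm")
print(f"{inside} of {len(heights)} healthy boys fall inside it")
```

Every boy in the simulated class is healthy by construction, yet a handful still land outside the interval, which is exactly the point made above.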

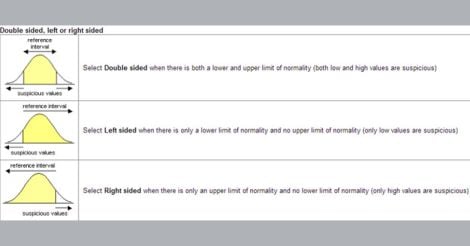

Sometimes, only one end of the range is required – e.g. for tumour markers like PSA (prostate specific antigen), when only a high value is suspicious of disease. In such instances, where a low value has no meaning, a single cut-off value will be given instead of a reference range.

Why can’t we perform all tests on everyone and look for disease?

This is a common misconception about investigations in general, be it blood tests, scans or others. Many people believe that tests are always required to make a diagnosis, and that abnormal values always indicate disease; neither is true. Self-directed investigation is rampant in India, and frequently these people end up chasing abnormal results from tests that should not have been performed in the first place.

In fact, not all test results become abnormal in disease, and when results are indeed abnormal, it doesn’t always mean there is disease. The following sections will explain this in some detail.

How is the cut-off value of a test determined?

Many tests have a cut-off value, above which it is considered abnormal or ‘positive’. For example, PSA is a blood test that is used to provisionally detect prostate cancer, while prostate biopsy is the confirmatory test. (It is not realistic to perform a prostate biopsy on everyone; hence we need a simpler, cheaper and safer screening test like PSA to first select those few people with high likelihood of having prostate cancer).

The higher the PSA value, the greater the chance that the patient actually has prostate cancer. But choosing a really high cut-off value would mean missing many cancer cases too. How, then, do we decide the optimal cut-off value for normal vs. abnormal PSA?

Let us look at two scenarios, where we perform the PSA test in a group of middle-aged men consisting of a few cases of prostate cancer and a large number of healthy people. Our task is to correctly identify those men with prostate cancer.

Scenario 1

If we decide to keep a high cut-off value of 20, then people whose PSA is above that value (positive test) will almost certainly have prostate cancer. This is because healthy people rarely have such high PSA values. However, keeping the cut-off value so high will miss those patients who actually do have prostate cancer, but with not-so-high PSA values. Even though they have prostate cancer, their PSA results get reported as ‘normal’ because their test value is less than 20. (This is also termed under-diagnosis, or a false-negative test.)

Scenario 2

If we decide to keep a lower cut-off value of 2, then many more people will have a so-called ‘positive’ PSA result. On the one hand, this will detect all of the prostate cancer cases in the group, as it would be very unlikely that prostate cancer can result in a PSA value less than 2.

Unfortunately, a few healthy people can also have PSA values near 2.5 or 3, which will be interpreted as a positive result in this case. Thus, we may wrongly label a few healthy people as having cancer by choosing a low cut-off value of 2. For the sake of confirmation, these healthy people are also subjected to a prostate biopsy which will, of course, return normal (over-diagnosis, or a false-positive test). The more false positives a test produces, the more anxiety, expense and trauma we create among these people.

For the technically minded, the above two scenarios will help illustrate two important attributes of a diagnostic test: sensitivity and specificity.

In the first instance, the test has a high specificity (defined as: when the test is performed among 100 healthy people, how many will have a normal result?). As there is no chance that a healthy person will have such a high PSA value over 20, all healthy people in the group will receive a normal report, attaining a specificity of 100 percent.

The second instance is an example of high sensitivity (defined as: when performed among 100 people with disease, how many will the test correctly diagnose?). Keeping a low cut-off value makes sure we don’t miss anyone with disease - everyone with disease will get a positive test report, thus attaining 100 percent sensitivity.

While a sensitivity of 100 percent might seem good, it shows only half of the picture. What about those who are healthy and still got a positive PSA result? Doesn’t the test make them more anxious by giving them a false-positive result? This translates to a low specificity. In other words, even though the sensitivity is 100 percent when we use a PSA cut-off value of 2, the low specificity leaves the test of little practical use.

In the first example, though the specificity is 100 percent because of a high PSA cut-off value, the sensitivity is low as many people with disease will get a negative test result.

In most instances, as in the case above, there is a trade-off between sensitivity and specificity: the higher the sensitivity of any test, the lower the specificity will be, and vice versa.
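The trade-off can be made concrete with a small sketch. The PSA values below are invented purely for illustration (they are not patient data), and `sensitivity_specificity` is a hypothetical helper that treats any value above the cut-off as a positive test.

```python
def sensitivity_specificity(diseased, healthy, cutoff):
    """Return (sensitivity, specificity) when values above `cutoff` count as positive."""
    true_positives = sum(1 for value in diseased if value > cutoff)
    true_negatives = sum(1 for value in healthy if value <= cutoff)
    return true_positives / len(diseased), true_negatives / len(healthy)


# Hypothetical PSA values, made up for this example only.
cancer_psa = [3.1, 4.6, 5.2, 6.8, 9.5, 12.0, 18.0, 25.0]
healthy_psa = [0.4, 0.7, 0.9, 1.1, 1.5, 1.8, 2.3, 3.3, 4.0, 5.0]

for cutoff in (2, 4, 20):
    sens, spec = sensitivity_specificity(cancer_psa, healthy_psa, cutoff)
    print(f"cut-off {cutoff:>2}: sensitivity {sens:.1%}, specificity {spec:.1%}")
```

With these toy numbers, the lowest cut-off catches every cancer but flags several healthy men, while the highest cut-off flags no healthy man but misses most cancers.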

Though both are desirable attributes for a test, very few tests achieve high sensitivity and high specificity together. What is the solution then? The answer lies in a specialised method that helps determine the best cut-off value.

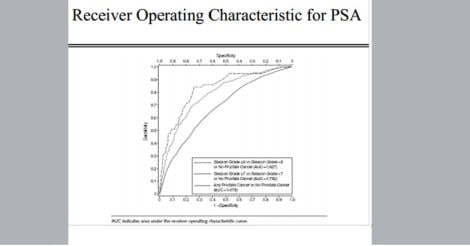

Using a statistical technique called the ROC (receiver operating characteristic) curve, an optimal cut-off value is chosen in such cases, so that the number of people who get under-diagnosed and over-diagnosed is kept to a minimum. An ROC curve plots the true-positive rate (sensitivity) against the false-positive rate (1 - specificity) for different cut-off values. The area under the curve (AUC) summarises how well the test performs overall: those tests whose AUC is closest to 1.0 have the highest accuracy.

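Applying the ROC curve to the example above, a PSA cut-off value of 4 offers the best balance between sensitivity and specificity. (The AUC describes the curve as a whole, not any single cut-off; the optimal cut-off is the point on the curve where the two attributes are best balanced.) This makes it a reasonably good test, although never 100 percent accurate.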
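A minimal sketch of this procedure follows. It makes several assumptions: the PSA values are invented (and deliberately arranged so that the answer comes out at 4), the cut-off is picked with Youden's index (sensitivity + specificity - 1), which is one common criterion among several, and the function names are hypothetical. The article does not say which criterion was actually used for PSA.

```python
def roc_points(diseased, healthy):
    """Return (false_positive_rate, true_positive_rate, cutoff) for each candidate cut-off."""
    points = [(1.0, 1.0, float("-inf"))]  # cut-off so low that everyone tests positive
    for cutoff in sorted(set(diseased) | set(healthy)):
        tpr = sum(v > cutoff for v in diseased) / len(diseased)
        fpr = sum(v > cutoff for v in healthy) / len(healthy)
        points.append((fpr, tpr, cutoff))
    return points


def area_under_curve(points):
    """Area under the ROC curve, computed with the trapezoid rule."""
    curve = sorted((fpr, tpr) for fpr, tpr, _ in points)
    return sum((x2 - x1) * (y1 + y2) / 2
               for (x1, y1), (x2, y2) in zip(curve, curve[1:]))


def best_cutoff(points):
    """Cut-off with the largest Youden index (true-positive rate minus false-positive rate)."""
    return max(points, key=lambda p: p[1] - p[0])[2]


# Hypothetical PSA values, made up for this example only.
cancer_psa = [3.1, 4.6, 5.2, 6.8, 9.5, 12.0, 18.0, 25.0]
healthy_psa = [0.4, 0.7, 0.9, 1.1, 1.5, 1.8, 2.3, 3.3, 4.0, 5.0]

points = roc_points(cancer_psa, healthy_psa)
print(f"AUC: {area_under_curve(points):.2f}")
print(f"Best cut-off by Youden's index: {best_cutoff(points)}")
```

Note that the AUC is a single number for the whole test, whereas the chosen cut-off is one point on the curve.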

The above story illustrates how cut-off values and normal ranges are determined for lab tests.

Figure 3. An example of an ROC curve

Can the same test result produce different conclusions in different people?

The two examples quoted earlier – the bilirubin and haemoglobin level - show that an isolated abnormal lab result may not have any significance - unless applied in the right clinical setting. The following technical example will illustrate the futility of relying exclusively on lab tests to make a diagnosis.

Prostate cancer is almost exclusively a disease of older men. As discussed above, a PSA value greater than 4 is commonly associated with it. (Other conditions of the prostate such as an infection can also raise PSA).

In the following illustrative study, we are going to use PSA at a cut-off value of 4.

For the sake of a study, if we perform the PSA test on a large group of college students, we will find at least a few values that are above this cut-off value of 4. How can this be possible? (We already know that as a group, it is almost impossible for young men to have prostate cancer).

If we take all the abnormal results (‘positives’) of this test and thoroughly investigate those subjects with a prostate biopsy, there will be no evidence of cancer among them. These test results are therefore called ‘false positives’. (Their PSA might have been elevated from causes other than cancer.)

Now let us run this test on a group of men over age 70, who are a high risk group for prostate cancer. A few tests will come back positive: that is, PSA value greater than 4. If we take those people who have a positive PSA test and then investigate with a prostate biopsy (the confirmatory test, or the gold standard test), several of them will indeed have prostate cancer. These are called ‘true positives’.

It is thus clear that a positive test result need not always indicate the presence of disease. The ability of a positive test to correctly predict disease is called Positive Predictive Value or PPV (defined as: out of 100 positive test results, how many really had disease?).

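The difference between PPV and sensitivity lies in the starting point. PPV starts from the test result: among those who tested positive, how many actually have the disease? Sensitivity and specificity start from the person’s true status: among those who have the disease (or are healthy), how many does the test classify correctly?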

From this experiment, it is clear that the positive predictive value of the same test can vary depending on how likely the study group is to have the disease. When performed in a high prevalence group (older men), the positive predictive value is higher – the positive test results correctly predicting a cancer diagnosis in most cases. (Prevalence is a measure of how commonly a given condition is present in a given population)

In contrast, when performed in a low-probability group (men in their 20s, who almost never get prostate cancer), the Positive Predictive Value of the PSA test was much lower, close to zero. None of the positive test results in this group were in fact due to cancer. Doing this test in such a group also created unnecessary anxiety and led to further investigations in those few individuals who had a false-positive result.

Even a fairly good test (with both sensitivity and specificity of 85 percent) will have a Positive Predictive Value of only about 5 percent when the disease prevalence in that population is low, at 1 in 100. (This means that when the test is performed in this population, only about 5 of every 100 positive test results will really indicate disease. At a prevalence of 1 in 1000, the figure falls below 1 percent.)

But the Positive Predictive Value of the same test increases roughly 7-fold, to about 39 percent, if the disease happens to be more common, with prevalence rising to 1 in 10. A PPV of about 39 percent means that about 39 percent of the positive test results in this particular population reflect actual disease.
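The dependence of PPV on prevalence follows directly from its definition (true positives divided by all positives) and can be checked with a short calculation. The sketch below assumes a test with 85 percent sensitivity and 85 percent specificity, as in the example; the function name is hypothetical.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Share of positive results that are true positives."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Same test, three populations with different disease prevalence.
for label, prevalence in (("1 in 1000", 0.001), ("1 in 100", 0.01), ("1 in 10", 0.1)):
    ppv = positive_predictive_value(0.85, 0.85, prevalence)
    print(f"prevalence {label}: PPV {ppv:.1%}")
```

Running it shows the PPV climbing steeply as the disease becomes more common, even though the test itself is unchanged.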

In a lighter vein, there is a popular Birbal story that illustrates this principle. Birbal was emperor Akbar’s wise counsellor. Once while walking outside Akbar’s palace on a moonlit night, Birbal spotted a man anxiously searching for something on the ground. When Birbal inquired what he was searching for, the man said he had lost his gold ring. Birbal asked him where he thought he’d lost the ring. The man pointed to a faraway mango tree, and said: “I lost the ring under that tree.”

Surprised, Birbal asked the man: “Then why are you searching here, instead of looking underneath that tree?”

The man replied: “But sir, it is dark underneath the tree. It is brighter here, so I can search more easily”

Ordering lab tests indiscriminately isn’t very different from this foolish man’s act. When we order a lab test where the chance of disease is small, the chance of obtaining an inaccurate or misleading result is high. It is better to focus one’s attention on the area where the probability of disease is high (just as in the story above, where the probability of finding the ring was highest underneath the tree).

The PSA test is used here as a basic example to illustrate the complex topic of ordering and interpreting investigations. The same principles apply to scans, other blood tests, ECGs and X-rays.

The following are some of the basic questions to consider before ordering a lab test.

1. Why is the test being ordered?

2. Is the expense justifiable?

3. Can the test reliably diagnose disease?

4. What are the consequences of not ordering the test?

5. Are the test results being interpreted by a qualified person, in the right context?

6. Will the test results influence the treatment of the patient?

The most important question: Why is the test being ordered?

The practice of good medicine involves taking a detailed history first, examining the patient, forming a diagnosis and then administering treatment. The vast majority of the lab and radiological tests are to be ordered only if required – that is, to rule out or confirm a diagnosis that is deemed likely by the doctor.

In addition, a few tests might be required for monitoring certain treatments or diseases, and still fewer, for age-appropriate screening as directed by scientific society guidelines.

However, with healthcare becoming an increasingly unregulated industry, the original physician’s thought process that drove intelligent investigation is often missing. Instead of following a general practitioner’s educated advice about their health, people now approach healthcare facilities, almost like they would stroll into a supermarket with a shopping cart. From the displayed menu, with some assistance from the clerk at the desk, they choose a ‘package’ of tests for a fee, and then voluntarily undergo batteries of tests variously called ‘health package’ or ‘routine’ tests.

These tests can be quite extensive at times and are often unwarranted, being of low positive predictive value for the reasons described above. Naturally, some of these test results will be out of range even in healthy people (as in Anil’s case), and the person may eventually come to more harm than good.
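How likely is it that a healthy person gets at least one out-of-range result from such a package? A rough estimate is sketched below. It rests on two simplifying assumptions: each test uses a reference range that covers 95 percent of healthy people, and the tests are independent of one another. Real lab tests are often correlated, so the true figures will differ. The function name is hypothetical.

```python
def chance_of_any_out_of_range(number_of_tests, coverage=0.95):
    """Probability that a healthy person has at least one result outside the range.

    Assumes independent tests, each with a range covering `coverage` of healthy people.
    """
    return 1 - coverage ** number_of_tests


for number_of_tests in (1, 5, 10, 20):
    chance = chance_of_any_out_of_range(number_of_tests)
    print(f"{number_of_tests:>2} tests: {chance:.0%} chance of at least one 'abnormal' value")
```

Under these assumptions, a 20-test package flags more healthy people than it clears.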

What are some of the drawbacks of excessive lab testing?

When lab tests are ordered without a clinical indication, false-positive results invariably show up. The patient is then forced to undergo more detailed investigations to exclude serious illness, like in the example above. While the initial test itself might not be costly, the downstream expenses and the associated stress can be substantial. Downstream costs can be due to further confirmatory lab tests, hospitalizations, scans, biopsies, other surgical procedures, and treatment costs of any complications that might follow such intervention.

Aren’t there any good sides for large-scale testing of healthy people?

On the good side, a silent tumour might occasionally get diagnosed at an early stage using a scan – that is, even before the patient develops symptoms. However, because such instances are exceedingly rare, thousands of healthy people will have to be scanned before one such tumour can be discovered. Therefore, the universal usage of scans cannot be justified for this purpose.

Large scale testing also leads to early detection of diabetes and high cholesterol; this can help tailor lifestyle advice - although these are best checked as directed by a qualified doctor at appropriate intervals. Screening for illnesses is an elaborate topic in itself, and will be discussed at another time.

How common is excessive lab testing, and what drives it?

Researchers from Harvard University found that about 40 percent of tests ordered worldwide are unnecessary (overutilization during initial testing). Confirming the lack of proper reasoning behind such decisions, the reverse was also true: the right tests are not ordered when necessary in a similar number of cases (underutilization).

Medicine is not an exact science, and not all diseases have symptoms described in the text-book. Though a diagnosis can be made just from history and physical examination in many instances, some doctors tend to over-order tests from the fear of ‘missing something’, or ‘just in case’.

Chasing the zebra: The classic example of applying common sense in making a diagnosis is taught in medical school as follows: “When you hear the sound of hooves outside your window, you are better off thinking it is a horse, rather than a zebra”. Though doctors are aware that a ‘rare diagnosis is rarely correct’, the undue emphasis placed on rare diseases and syndromes during medical training and at scientific conferences prompts at least some of them to order esoteric investigations as a habit. They reckon that although the diagnosis might be rare in a large population, it can be impossible to say without testing whether that single patient is an exception to the rule. When this process repeats with other patients, more tests get ordered than necessary, and the number of false positives also increases.

The increasingly litigious environment in healthcare also prompts many providers to perform unnecessary tests, lest such a rare diagnosis be missed. Pressure from patients is not uncommon either: many patients express discontent if the doctor does not order an investigation as part of the clinical assessment.

In India, the situation is worse, particularly among those who can afford it and routinely undergo self-directed, lab-directed and ‘employer-directed’ testing. This generates a lot of inappropriate lab results, as none of those tests has a doctor’s clinical reasoning behind it.

What is a solution?

Not only are lab tests increasingly over-ordered, but the right tests are not ordered when necessary either. We need to put the brain back into the system. There is no such thing as a ‘routine’ test. The need of the hour is to create and follow evidence-based protocols for investigations appropriate for the patient’s clinical setting.

Tests were invented for a reason: to be part of an intelligent, informed and structured thought process. Ordering a test and interpreting it requires not only an initial diagnostic question, but a sound understanding of biostatistics, a few fundamentals of which are explained above.

In summary, it is better to avoid self-testing, that is, testing without consulting a trained healthcare professional. When done diligently and with scientific purpose, a test can provide the vital missing piece in the jigsaw puzzle of disease. In contrast, an improperly ordered test result can float around like a single piece of a large puzzle that was never part of any picture in the first place.

(The author is a senior consultant gastroenterologist and deputy medical director, Sunrise group of hospitals)